
Author: Atharva Kawale

Date: 17 April 2026

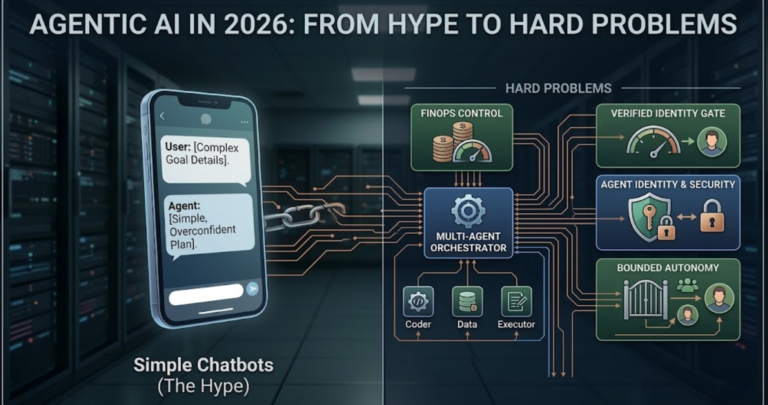

Agentic AI has evolved from a buzzy concept to a brutal engineering discipline in 2026. Explore the hard realities of multi-agent orchestration, FinOps, and the bounded autonomy required to ship reliable autonomous systems.

We all have that one internal company Slack channel dedicated to agentic prototypes that never actually saw the light of production. The video demos are always flawless, showing a model chaining thoughts together, hitting a third-party API, and gracefully handling a synthetic edge case.

But the moment someone asks to deploy that exact same agent against live customer data or write-access databases, the engineering room goes incredibly quiet. The central tension in our industry right now is not about what language models can do; the raw reasoning capability is undeniably here.

The actual tension is that our organizational architectures, security perimeters, and financial operations practices were never designed for systems that think and act continuously. We are effectively hiring a wildly fast, hyper-competent junior colleague and handing them an unlimited corporate credit card, no onboarding manual, and no direct manager.

Only about twenty-five percent of organizations actively experimenting with agents have actually scaled them to production environments. The rest of the industry is currently stuck in proof-of-concept purgatory, slowly discovering that autonomy is terrifying when you haven’t engineered the boundaries first.

Let us draw a hard, uncompromising line between the old world and the new world, and stop calling every language model wrapped in a simple Python script an agent. As highlighted in NexGen Architects’ February 2026 industry predictions, the transition from generative AI to agentic AI represents a fundamental architectural boundary.

The industry is only now starting to ship real agents because developers needed time to internalize that this is an entirely new computing paradigm. Integrating an autonomous agent is nothing like calling a static REST API, where the payload structure is guaranteed and execution is strictly linear.

It requires massive amounts of behavioral research, brutal testing, and continuous iteration just to get a baseline workflow functioning reliably. Furthermore, the underlying logic is intensely fragile due to severe model-prompt lock-in across the ecosystem.

A meticulously crafted system prompt that drives flawless multi-step reasoning on one frontier model will routinely fail, hallucinate, or loop infinitely when hot-swapped to a competitor’s model. This means engineering teams are forced into endless cycles of continuous improvement and re-verification rather than simply shipping new features.

A legitimate agent rests on four non-negotiable architectural pillars to survive this fragility. First is deep goal understanding, meaning the system extracts business intent and specific success criteria rather than just following a literal text prompt.

Second is dynamic step decomposition combined with active re-planning on failure. When an API call inevitably times out or returns bad data, a true agent evaluates the failure context, adjusts its internal plan, and attempts an alternate execution route instead of just throwing a stack trace.
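The re-planning behavior can be sketched as a loop that catches a failed step, records the failure context, and moves on to an alternate route. This is a minimal illustration under stated assumptions, not any framework's API: `Step`, `RunLog`, `execute_with_replan`, and the two tool functions are hypothetical. The alternate routes are precomputed here to keep the example deterministic; a real agent would more likely ask its planner model to propose the next route from the recorded failures.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Step:
    """One sub-goal plus an ordered list of candidate routes (hypothetical structure)."""
    name: str
    routes: List[Callable[[], str]]


@dataclass
class RunLog:
    results: Dict[str, str] = field(default_factory=dict)
    failures: List[str] = field(default_factory=list)


def execute_with_replan(plan: List[Step]) -> RunLog:
    log = RunLog()
    for step in plan:
        for route in step.routes:
            try:
                log.results[step.name] = route()
                break  # this route succeeded; continue with the next step
            except Exception as exc:
                # keep the failure context so the next route (or a human) can use it
                log.failures.append(f"{step.name}/{route.__name__}: {exc!r}")
        else:
            # no route worked: escalate with context instead of a bare stack trace
            raise RuntimeError(f"step {step.name!r} exhausted all routes: {log.failures}")
    return log


# Usage: the primary API times out, so the agent falls back to an alternate route.
def primary_api() -> str:
    raise TimeoutError("upstream timed out")


def cached_mirror() -> str:
    return "invoice #123 from mirror"


log = execute_with_replan([Step("fetch_invoice", [primary_api, cached_mirror])])
print(log.results)   # {'fetch_invoice': 'invoice #123 from mirror'}
print(log.failures)  # ["fetch_invoice/primary_api: TimeoutError('upstream timed out')"]
```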

Third is the raw ability to execute tools, hit authenticated APIs, and run generated code directly within a secure sandboxed environment. Finally, an agent absolutely requires state and memory persistence across different sessions.

It must remember what specific action failed yesterday to avoid repeating the exact same expensive mistake today. If your application architecture lacks any of these four pillars, you are simply building a conversational chatbot.
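Cross-session memory of failed actions can start as simply as a keyed store on disk. The sketch below assumes a local JSON file and a hypothetical `FailureMemory` class; the key combines the tool name with its canonicalized arguments, so the agent can check before acting whether the exact same call already failed in an earlier session.

```python
import json
import tempfile
from pathlib import Path
from typing import Dict, Optional


class FailureMemory:
    """Persists failed (tool, arguments) pairs across sessions in a JSON file.
    Hypothetical minimal store; a production agent would likely use a database."""

    def __init__(self, path: Path):
        self.path = path
        self._failures: Dict[str, str] = (
            json.loads(path.read_text()) if path.exists() else {}
        )

    @staticmethod
    def _key(tool: str, args: dict) -> str:
        # sort_keys makes the key stable regardless of argument order
        return f"{tool}:{json.dumps(args, sort_keys=True)}"

    def record_failure(self, tool: str, args: dict, reason: str) -> None:
        self._failures[self._key(tool, args)] = reason
        self.path.write_text(json.dumps(self._failures, indent=2))

    def known_failure(self, tool: str, args: dict) -> Optional[str]:
        return self._failures.get(self._key(tool, args))


# Usage: two separate "sessions" sharing the same memory file.
store = Path(tempfile.mkdtemp()) / "agent_memory.json"
yesterday = FailureMemory(store)
yesterday.record_failure("refund_api", {"order": 42}, "HTTP 403: missing scope")

today = FailureMemory(store)  # fresh object, state reloaded from disk
print(today.known_failure("refund_api", {"order": 42}))  # HTTP 403: missing scope
print(today.known_failure("refund_api", {"order": 7}))   # None
```

A production system would likely replace the file with a shared database and add expiry, since yesterday's failure (an expired token, say) may not hold today.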

Trying to force a single massive frontier model to handle planning, tool execution, and final output generation simultaneously is a guaranteed recipe for brittle logic and a massive cost explosion. Single-agent designs fail at scale because every decision, tool call, and piece of context funnels through one overloaded model, which quickly becomes a coordination bottleneck.

When engineers stuff too many tool definitions, operational guidelines, and conversation history into a single context window, the model inevitably loses focus. It hallucinates critical parameters, forgets its initial instructions, and falls into infinite execution loops.

The necessary architectural shift that defines our current reality is multi-agent orchestration, where highly specialized, narrowly scoped agents handle specific domains under the strict direction of a central coordinator. Think of it as the natural evolution from monolithic web applications to distributed microservices.

Just as microservices decoupled deployment cycles and scaling limits, multi-agent systems decouple cognitive reasoning. A researcher agent handles the messy data extraction, a coder agent writes the necessary integration script, and an executor agent actually runs it, all while the primary orchestrator keeps the global state intact.
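A minimal sketch of the researcher, coder, and executor split, assuming each specialist is a function that takes and returns a shared state dictionary. The agents here are deterministic stand-ins for LLM-backed agents and every name is hypothetical; LangGraph, Google ADK, and CrewAI each provide their own, richer abstractions. One deliberate design choice: the coder emits a structured spec rather than free-form code, and the executor runs only allow-listed operations, a simple stand-in for a real sandbox.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, object]
Agent = Callable[[State], State]


def researcher(state: State) -> State:
    # stand-in for an LLM agent that extracts structured records from messy sources
    return {**state, "records": [{"id": 1, "amount": 120}, {"id": 2, "amount": 80}]}


def coder(state: State) -> State:
    # stand-in for an agent that writes the integration logic; it emits a spec, not free code
    return {**state, "plan": {"op": "sum", "field": "amount"}}


ALLOWED_OPS = {"sum": sum, "max": max}  # the executor only runs allow-listed operations


def executor(state: State) -> State:
    spec = state["plan"]
    values = [record[spec["field"]] for record in state["records"]]
    return {**state, "result": ALLOWED_OPS[spec["op"]](values)}


def orchestrate(goal: str, pipeline: List[Tuple[str, Agent]]) -> State:
    """The orchestrator owns the global state and the record of who did what."""
    state: State = {"goal": goal, "history": []}
    for name, agent in pipeline:
        state = agent(state)
        state["history"] = state["history"] + [name]
    return state


final = orchestrate(
    "Total the disputed invoice amounts",
    [("researcher", researcher), ("coder", coder), ("executor", executor)],
)
print(final["result"], final["history"])  # 200 ['researcher', 'coder', 'executor']
```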

But the microservices analogy breaks down when it comes to deterministic behavior. Standard microservices communicate via rigid, predictable APIs; agents communicate via natural language or intermediate JSON states, which introduces many probabilistic failure points. Frameworks like LangGraph, Google ADK, and CrewAI have matured specifically to handle this chaotic internal routing, imposing deterministic state machines on probabilistic actors.
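One way to impose a deterministic state machine on probabilistic agents is to treat every agent message as untrusted input: parse it, check it against a schema, and accept only transitions the workflow explicitly allows. The sketch below is a hypothetical, framework-free illustration (it is not how LangGraph, Google ADK, or CrewAI implement routing); `TRANSITIONS` and `parse_agent_step` are invented names, and any invalid output falls through to an `escalate` state instead of being guessed at.

```python
import json

# Allowed transitions for a hypothetical dispute-handling workflow. The model may
# propose the next state, but only the transitions listed here are ever executed.
TRANSITIONS = {
    "triage": {"research", "escalate"},
    "research": {"draft_reply", "escalate"},
    "draft_reply": {"done", "escalate"},
}
REQUIRED_FIELDS = {"next_state": str, "reason": str}


def escalate(reason: str) -> dict:
    return {"next_state": "escalate", "reason": reason}


def parse_agent_step(current: str, raw_output: str) -> dict:
    """Validate an agent's JSON output before the orchestrator acts on it."""
    try:
        msg = json.loads(raw_output)
    except json.JSONDecodeError:
        return escalate("unparseable agent output")
    if not isinstance(msg, dict) or any(
        not isinstance(msg.get(key), expected) for key, expected in REQUIRED_FIELDS.items()
    ):
        return escalate("schema violation")
    if msg["next_state"] not in TRANSITIONS.get(current, set()):
        return escalate(f"illegal transition {current} -> {msg['next_state']}")
    return msg


print(parse_agent_step("triage", '{"next_state": "research", "reason": "need order history"}'))
print(parse_agent_step("triage", '{"next_state": "done", "reason": "looks fine"}'))
print(parse_agent_step("research", "Sure! Here is the JSON you asked for."))
```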

According to Gartner, multi-agent system inquiries surged by a staggering 1,445 percent from the first quarter of 2024 to the second quarter of 2025, a clear sign that engineering teams have finally realized that scaling enterprise autonomy requires splitting the brain.

The dirty secret of this entire wave is that over forty percent of enterprise agentic projects get unceremoniously killed before they ever touch a production server. The models are no longer the bottleneck; organizations and legacy business processes are.

You can easily build a system that flawlessly and autonomously resolves complex customer disputes in a vacuum. But if the surrounding business cannot legally or operationally support automated financial action, the project dies on the vine.

There are four brutally real reasons these initiatives fail, and none of them involve latency metrics or benchmark scores. First is the reality of runaway inference and orchestration costs. When developers build agents without inherent financial controls, a simple logic loop can burn thousands of dollars over a long weekend.

Second is the glaring lack of clear business ownership over agent outcomes. The engineering department builds the agent, but business leaders refuse to sign off on the deployment because they fundamentally do not understand the probabilistic risks involved.

Third, teams consistently attempt to deploy without robust runtime controls and deep auditability. When an autonomous agent randomly deletes a user record, there is often no traceable, human-readable log showing exactly which LLM call made that decision and why.

Finally, engineers simply cannot explain or defend automated decisions to non-technical stakeholders. If a routing agent denies a customer refund based on a completely hallucinated policy, and the engineering team cannot immediately point to the specific logic branch that failed, institutional trust evaporates instantly.

We are actively staring down a global agentic market projected to grow rapidly from 7.8 billion dollars today to well over 52 billion by 2030, yet we are largely ignoring the fundamental engineering roadblocks. The industry focus must desperately shift away from raw capability and toward operational constraints.

A traditional web application makes a predictable database query whose cost can be estimated in advance. A single autonomous agent might make hundreds or thousands of expensive LLM calls just to resolve one ambiguous user request.

Cost control must become a strict architectural primitive, not an operational afterthought patched on by finance at the end of the month. Practical engineering patterns are emerging to stop this hemorrhage of cash, starting with model tiering.

Use heavy, expensive reasoning models strictly for the planning phase of a plan-and-execute loop, then farm out the repetitive sub-tasks to smaller, radically cheaper execution models. Caching vector database responses and intermediate execution steps further cuts redundant inference.
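Model tiering and step caching can be combined in a small routing layer. In this sketch the model names and per-call prices are placeholders, `call_model` stands in for a real client, and `functools.lru_cache` provides an in-process cache keyed on `(model, prompt)`; caching vector database responses across processes would need a shared store instead. Caching like this is only appropriate where reusing an earlier answer to an identical prompt is acceptable.

```python
from functools import lru_cache
from typing import List

# Hypothetical tiers and per-call prices; substitute your provider's real models and rates.
TIERS = {
    "plan": ("large-reasoning-model", 0.050),
    "subtask": ("small-execution-model", 0.002),
}
PRICE = {model: price for model, price in TIERS.values()}
billed_calls: List[str] = []


def call_model(model: str, prompt: str) -> str:
    # placeholder for a real LLM client call; records every billable request
    billed_calls.append(model)
    return f"[{model}] response to: {prompt}"


@lru_cache(maxsize=1024)
def cached_completion(model: str, prompt: str) -> str:
    # identical (model, prompt) pairs are answered from memory instead of re-billed
    return call_model(model, prompt)


def run(kind: str, prompt: str) -> str:
    model, _ = TIERS[kind]
    return cached_completion(model, prompt)


run("plan", "Break dispute #881 into sub-tasks")  # expensive model, once
for item in ["A", "B", "A", "B"]:  # repeated sub-tasks hit the cache
    run("subtask", f"Classify line item {item}")

print(billed_calls)  # ['large-reasoning-model', 'small-execution-model', 'small-execution-model']
print(round(sum(PRICE[m] for m in billed_calls), 3))  # 0.054
```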

Beyond caching, serious teams are enforcing token budgets and dollar-based spending caps at the API gateway layer, using tools like LiteLLM. This echoes MachineLearningMastery’s January 2026 analysis of agentic trends, which positioned inference cost management as the primary technical hurdle for the year.
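Dollar-based enforcement boils down to checking projected spend before each call and refusing once a cap would be crossed. LiteLLM offers its own gateway-level budget settings, which this sketch does not reproduce; `BudgetGuard`, the rate, and the cap are hypothetical values chosen to show a looping agent being cut off.

```python
class BudgetExceeded(RuntimeError):
    """Raised before a call that would push spend past the cap."""


class BudgetGuard:
    """Hypothetical per-session dollar cap, checked before every model call."""

    def __init__(self, max_usd: float, usd_per_1k_tokens: float):
        self.max_usd = max_usd
        self.rate = usd_per_1k_tokens
        self.spent = 0.0

    def charge(self, tokens: int) -> None:
        cost = tokens / 1000 * self.rate
        if self.spent + cost > self.max_usd:
            # refuse the call up front instead of discovering the bill later
            raise BudgetExceeded(
                f"call would bring spend to ${self.spent + cost:.2f}, cap is ${self.max_usd:.2f}"
            )
        self.spent += cost


guard = BudgetGuard(max_usd=1.00, usd_per_1k_tokens=0.01)
completed = 0
try:
    while True:  # simulates an agent stuck in a logic loop
        guard.charge(tokens=8_000)
        completed += 1
except BudgetExceeded as exc:
    print(completed, exc)  # 12 call would bring spend to $1.04, cap is $1.00
```

In practice the exact token count is only known after a response arrives, so a real gate would check an estimate up front and reconcile actual usage afterwards.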

System identity has definitively replaced raw data as the primary security boundary in the enterprise. As our agents interact with internal billing systems and source code repositories on behalf of actual users, static roles and traditional perimeter security fall apart entirely.

According to February 2026 threat research from Barracuda Networks, complex agent impersonation and advanced prompt injection are unequivocally the primary attack vectors for the modern enterprise. A malicious external user does not need to brutally breach your SQL database anymore; they just need to cleverly convince your customer service agent that they are the CEO.

The only viable direction is a shift to purpose-bound permissions and continuous intent verification. Agents must operate under strict, dynamically generated service accounts that expire the moment the session ends. Runtime policy enforcement must sit between the agent’s generated action and the actual API execution.
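Purpose-bound, short-lived permissions and a policy check between the agent's proposed action and the real API call can be sketched in a few lines. Everything here is hypothetical: `ScopedCredential`, `issue_credential`, and `enforce` are illustrative names, session-bound expiry is approximated with a short TTL, and the timestamps are fixed so the output is deterministic. A real deployment would issue credentials from its identity provider rather than minting them in application code.

```python
import time
from dataclasses import dataclass
from typing import FrozenSet, Iterable, Optional


@dataclass(frozen=True)
class ScopedCredential:
    """Hypothetical short-lived grant bound to one session and one purpose."""
    session_id: str
    purpose: str
    allowed_actions: FrozenSet[str]
    expires_at: float


class PolicyDenied(PermissionError):
    pass


def issue_credential(session_id: str, purpose: str, actions: Iterable[str],
                     ttl_s: float = 300.0, now: Optional[float] = None) -> ScopedCredential:
    now = time.time() if now is None else now
    return ScopedCredential(session_id, purpose, frozenset(actions), now + ttl_s)


def enforce(cred: ScopedCredential, action: str, target: str,
            now: Optional[float] = None) -> str:
    """Policy check that sits between the agent's proposed action and the real API call."""
    now = time.time() if now is None else now
    if now >= cred.expires_at:
        raise PolicyDenied("credential expired")
    if action not in cred.allowed_actions:
        raise PolicyDenied(f"{action!r} is outside purpose {cred.purpose!r}")
    return f"executing {action} on {target}"  # the real API call would happen here


# Fixed timestamps keep the example deterministic.
cred = issue_credential("sess-42", "refund-lookup", {"read_order"}, ttl_s=300, now=1000.0)
print(enforce(cred, "read_order", "order/881", now=1100.0))  # executing read_order on order/881
for action, t in [("delete_user", 1100.0), ("read_order", 1400.0)]:
    try:
        enforce(cred, action, "order/881", now=t)
    except PolicyDenied as exc:
        print(exc)
# Expected output of the loop:
# 'delete_user' is outside purpose 'refund-lookup'
# credential expired
```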

Technical governance is no longer a boring compliance checkbox; it is a real competitive advantage for those who get it right. Organizations that establish strict governance rules early are the ones deploying into high-value, revenue-generating workflows faster and more safely.

The ultimate goal here is bounded autonomy, which means defining crystal clear authority limits and deterministic escalation paths directly to human operators. You must rigorously instrument the boundaries of the model’s confidence.

If an agent's self-reflection confidence score falls below ninety percent, it must automatically pause execution and route a contextual request to a human orchestrator. This philosophy applies everywhere, from automated software pipelines to Physical AI applications in heavy manufacturing and global logistics, where autonomous robotic systems are finally moving from flashy demos to active, high-stakes pilots.
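The confidence gate itself is simple once a score exists. This sketch assumes the agent already produces a numeric 0 to 1 confidence from some self-reflection step (self-reported scores are not guaranteed to be well calibrated, so the threshold deserves testing); `ProposedAction`, `gate`, and the in-memory `escalation_queue` are hypothetical stand-ins for a real human-in-the-loop integration.

```python
from dataclasses import dataclass
from typing import Dict, List

CONFIDENCE_FLOOR = 0.90  # threshold from the text


@dataclass
class ProposedAction:
    description: str
    confidence: float  # assumed: a 0-1 score from a self-reflection step
    context: str


escalation_queue: List[Dict[str, object]] = []  # stand-in for a ticketing or chat-ops hook


def gate(action: ProposedAction) -> str:
    """Approve high-confidence actions; pause and hand everything else to a human."""
    if action.confidence < CONFIDENCE_FLOOR:
        escalation_queue.append({
            "action": action.description,
            "confidence": action.confidence,
            "context": action.context,
        })
        return "paused_for_human"
    return "approved"


print(gate(ProposedAction("Refund $40 for order 881", 0.97, "duplicate charge confirmed")))  # approved
print(gate(ProposedAction("Deny refund for order 882", 0.72, "policy text ambiguous")))  # paused_for_human
print(len(escalation_queue))  # 1
```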

The engineering teams actually winning and scaling in 2026 share a distinct, heavily disciplined technical DNA. First, rather than layering shiny new agents onto broken legacy processes, they tear down and redesign the entire workflow around agents.

The historical human role has formally shifted from manual task executor to high-level agent orchestrator. We are routinely seeing complex B2B customer response times drop from 42 hours down to near real-time, precisely because the entire operational pipeline was rebuilt from scratch to assume a machine is doing the initial routing.

These successful teams treat orchestration cost as a first-class engineering concern, failing continuous integration builds when a test agent run costs more than its unit economics allow. They also define agent authority before deployment, rather than waiting for a rogue API call to break a production database.
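Failing a CI build on unit economics can be expressed as an ordinary test. In this sketch the budget, the per-tier token rates, and `run_agent_scenario` (a stand-in for replaying a recorded test scenario) are all hypothetical; under a test runner such as pytest, the failing assertion would make the build fail.

```python
from typing import Dict

MAX_USD_PER_RUN = 0.25  # hypothetical per-run budget agreed with the business owner
USD_PER_1K_TOKENS = {"planner": 0.015, "worker": 0.0006}  # placeholder rates


def run_agent_scenario() -> Dict[str, int]:
    # stand-in for replaying a recorded test scenario; returns tokens used per model tier
    return {"planner": 6_000, "worker": 120_000}


def cost_of(usage: Dict[str, int]) -> float:
    return sum(tokens / 1000 * USD_PER_1K_TOKENS[tier] for tier, tokens in usage.items())


def test_unit_economics() -> None:
    cost = cost_of(run_agent_scenario())
    # a failing assertion fails the test, and therefore the CI build
    assert cost <= MAX_USD_PER_RUN, f"agent run cost ${cost:.3f} exceeds ${MAX_USD_PER_RUN:.2f}"


if __name__ == "__main__":
    test_unit_economics()
    print(f"ok: ${cost_of(run_agent_scenario()):.3f} per run")  # ok: $0.162 per run
```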

Most importantly, they instrument their agents exactly as they instrument traditional microservices, demanding distributed traces for every thought loop and structured JSON logs for every tool execution. Google Cloud’s enterprise survey found that 88 percent of these disciplined early adopters are already seeing a positive return on investment.
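A minimal picture of what structured JSON logs for every tool execution might contain: one record per call that ties the action to its trace, to the LLM call that requested it, and to the model's stated rationale. This stdlib-only sketch uses hypothetical names (`run_tool`, `AUDIT_LOG`, the `llm-call-0007` identifier); in production these records would more likely be emitted as spans through a tracing standard such as OpenTelemetry.

```python
import json
import time
import uuid
from typing import Any, Callable, List

AUDIT_LOG: List[str] = []  # stand-in for a real sink: stdout, a file, or a log collector


def run_tool(tool: Callable[..., Any], *, trace_id: str, llm_call_id: str,
             rationale: str, **kwargs: Any) -> Any:
    """Execute a tool and emit one structured JSON record linking it to the LLM call
    (and the model's stated rationale) that requested it."""
    record = {
        "trace_id": trace_id,
        "span_id": uuid.uuid4().hex[:16],
        "llm_call_id": llm_call_id,
        "rationale": rationale,
        "tool": tool.__name__,
        "args": kwargs,
    }
    start = time.perf_counter()
    try:
        result = tool(**kwargs)
        record["status"] = "ok"
        return result
    except Exception as exc:
        record["status"] = "error"
        record["error"] = repr(exc)
        raise
    finally:
        # runs on success and failure, so every execution leaves a record
        record["duration_ms"] = round((time.perf_counter() - start) * 1000, 3)
        AUDIT_LOG.append(json.dumps(record))


def delete_user(user_id: int) -> str:
    return f"deleted user {user_id}"


trace_id = uuid.uuid4().hex
run_tool(delete_user, trace_id=trace_id, llm_call_id="llm-call-0007",
         rationale="user requested account closure", user_id=881)
entry = json.loads(AUDIT_LOG[-1])
print(entry["tool"], entry["llm_call_id"], entry["status"])  # delete_user llm-call-0007 ok
```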

Furthermore, as major brands adapt to Answer Engine Optimization (AEO), these elite teams are completely restructuring their internal data to be natively machine-readable. Commercetools reinforced this exact architectural necessity in their February 2026 report on agentic commerce, noting that brands failing to optimize for machine buyers will simply vanish from autonomous supply chains.

The uncomfortable reality is that the era of building toy agents in a Jupyter notebook is completely over. The coming year will not reward the engineering teams with the most enthusiastic early adopters, nor will it reward the cleverest zero-shot prompt engineering.

It will ruthlessly reward the organizations with the clearest system architecture, the most impenetrable data governance, and the ironclad discipline to let machines act without ever surrendering operational control. We have successfully built the cognitive engines; now we have to lay down the heavy steel tracks.

Before you blindly kick off your next multi-agent initiative or approve a massive infrastructure budget, you need to answer one fundamental, highly uncomfortable question. If your primary orchestrator hallucinates at two in the morning and decides to rewrite your production database, what exact architectural guardrail is going to stop it?

Atharva Kawale

Atharva is a dynamic Software Engineer with 3 years of experience at the intersection of Python development, DevOps, and AI/ML. Passionate about solving real-world problems through code, he’s increasingly focused on data analytics, ML pipelines, and intelligent automation.

A dedicated AI enthusiast, Atharva actively explores new models, frameworks, and workflows while staying on top of the latest trends through tech blogs and open-source communities.
